FutureSearch benchmarks

Want to run on our benchmarks? Please contact us at evals@futuresearch.ai.

FutureSearch evaluates its research agents in three ways:

- Deep Research Bench (DRB): hard open-web tasks with curated answers.

- Bench to the Future 2 (BTF-2): "pastcasting" forecasts measured by accuracy.

- S&P 500 stock forecasts: long-horizon revenue, margin, and payout forecasts.

Deep Research Bench (DRB)

DRB benchmarks how well LLM agents do research on the web. Each of its diverse, real-world tasks provides 10k to 100k webpages stored offline for search and reasoning, along with carefully curated answers.

Bench to the Future 2 (BTF-2)

BTF-2 evaluates agents on 1,417 hard forecasting questions. Agents research and forecast offline against a frozen 15M-document corpus. Rationales and reasoning traces are evaluated for strategic reasoning.

DRB Leaderboard

Last updated:

| Agent | Score | Cost ($) | Runtime (s) |
|---|---|---|---|

Scores are averaged first within each task category (radar chart), then across all tasks (table). Runtime is estimated from ReAct steps rather than measured as wall-clock time.
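A minimal sketch of the two-stage averaging, assuming the table score is the mean of the per-category means (a macro average). The page does not give the exact formula, and `category_and_overall_scores`, the category names, and the scores below are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def category_and_overall_scores(results):
    """Two-stage average for one agent.

    results: iterable of (category, task_score) pairs.
    Returns (per_category, overall): per_category maps each category to its
    mean task score (radar-chart view); overall is the mean of those category
    means, which is an assumption about what the table reports.
    """
    by_category = defaultdict(list)
    for category, score in results:
        by_category[category].append(score)
    per_category = {cat: mean(scores) for cat, scores in by_category.items()}
    overall = mean(per_category.values())
    return per_category, overall


# Hypothetical task scores; category names are placeholders.
results = [
    ("cat_a", 0.8), ("cat_a", 0.6),
    ("cat_b", 0.5),
    ("cat_c", 0.3), ("cat_c", 0.5), ("cat_c", 0.4),
]
per_category, overall = category_and_overall_scores(results)
print(per_category, overall)
```

Here the macro average is about 0.533, while a plain mean over all six tasks would be about 0.517. Averaging per category first keeps categories with many tasks from dominating the overall score.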

Papers

BTF-2 Leaderboard

Last updated: 2026-04-20

| Agent | Brier (accuracy) | Calibration | Refinement |
|---|---|---|---|
| FutureSearch Agent | 0.119 | 0.002 | 0.081 |
| Opus 4.6 Agent | 0.130 | 0.005 | 0.075 |
| Gemini 3.1 Pro Agent | 0.141 | 0.012 | 0.069 |
| GPT-5.4 Agent | 0.152 | 0.010 | 0.056 |
| Grok 4.20 Beta Agent | 0.165 | 0.003 | 0.039 |

Brier scores on 1,417 pastcasting questions (lower is better). The FutureSearch Agent, an ensemble, is significantly more accurate than any single frontier agent. The radar chart shows CHAMPS KNOW strategic emphasis (Borda scores on 8 of the 10 dimensions).
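The page does not define the Calibration and Refinement columns. One reading consistent with the table is the Murphy decomposition of the Brier score, Brier = reliability − resolution + uncertainty, with Calibration as the reliability term (lower is better) and Refinement as the resolution term (higher is better). Under that reading, Brier − Calibration + Refinement should be the same uncertainty term for every agent on a shared question set, and it is: between 0.198 and 0.201 for all five rows. The sketch below assumes this interpretation; `brier_score`, `murphy_decomposition`, and the toy forecasts are hypothetical.

```python
from collections import defaultdict


def brier_score(probs, outcomes):
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def murphy_decomposition(probs, outcomes):
    """Return (reliability, resolution, uncertainty), where
    brier = reliability - resolution + uncertainty.

    Forecasts are grouped by exact value, which makes the identity exact.
    With continuous forecasts one would bin them, and it becomes approximate.
    """
    n = len(probs)
    base_rate = sum(outcomes) / n
    groups = defaultdict(list)
    for p, o in zip(probs, outcomes):
        groups[p].append(o)
    reliability = 0.0
    resolution = 0.0
    for p, group in groups.items():
        freq = sum(group) / len(group)  # observed frequency for this forecast value
        reliability += len(group) * (p - freq) ** 2  # miscalibration penalty
        resolution += len(group) * (freq - base_rate) ** 2  # reward for discrimination
    uncertainty = base_rate * (1 - base_rate)  # depends only on the outcomes
    return reliability / n, resolution / n, uncertainty


# Toy example: five binary questions.
probs = [0.9, 0.9, 0.2, 0.2, 0.2]
outcomes = [1, 1, 0, 0, 1]
bs = brier_score(probs, outcomes)
rel, res, unc = murphy_decomposition(probs, outcomes)
print(bs, rel, res, unc)
```

In this toy example the Brier score is 0.148 and the uncertainty term is 0.24. Because uncertainty depends only on the outcomes, agents answering the same questions can differ in Brier score only through calibration and resolution.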

Papers

Datasets

Best Accuracy per Dollar on DRB

Best Accuracy per Second on DRB

Evaluating forecasts on S&P 500 stock returns

On August 5, 2025, FutureSearch research agents forecast revenue, margin, and shareholder payout for each S&P 500 company through 2035.

To evaluate accuracy, we turned those forecasts into a dollar-neutral, industry-neutral paper portfolio: within each GICS industry, it goes long the most undervalued name and short the bottom half of the ranking.
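The page does not say how the forecasts are converted into a valuation for ranking names. One common approach, used here purely as an assumption, is to discount forecast shareholder payouts and add a Gordon-growth terminal value, then compare the result with market capitalization. The sketch treats "margin" as net margin and "shareholder payout" as the fraction of earnings returned to shareholders. `intrinsic_value`, `upside`, the discount rate, and the terminal growth rate are all hypothetical.

```python
def intrinsic_value(revenue, margin, payout_ratio, discount_rate, terminal_growth):
    """Present value of forecast payouts plus a Gordon-growth terminal value.

    revenue, margin, payout_ratio: equal-length lists of yearly forecasts for
    years 1..N (hypothetical inputs). Payout in year t is assumed to be
    revenue * margin * payout_ratio.
    """
    if discount_rate <= terminal_growth:
        raise ValueError("discount_rate must exceed terminal_growth")
    pv = 0.0
    payout = 0.0
    for t, (rev, m, pr) in enumerate(zip(revenue, margin, payout_ratio), start=1):
        payout = rev * m * pr  # cash returned to shareholders in year t
        pv += payout / (1 + discount_rate) ** t
    n = len(revenue)
    # Terminal value at year N, growing the final payout forever.
    terminal = payout * (1 + terminal_growth) / (discount_rate - terminal_growth)
    pv += terminal / (1 + discount_rate) ** n
    return pv


def upside(value, market_cap):
    """Forecast-implied undervaluation: positive means undervalued."""
    return value / market_cap - 1


# Constant payout of 10 per year at a 10% discount rate and zero growth
# is a perpetuity worth 10 / 0.10 = 100.
v = intrinsic_value([100, 100], [0.1, 0.1], [1.0, 1.0], 0.10, 0.0)
u = upside(v, 80.0)
print(v, u)
```

Ranking names within an industry by `upside` would then give the "most undervalued" long candidate and the bottom half for the short basket.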

The chart on the left shows how this portfolio has performed; the NVIDIA panel on the right summarizes the forecasts for a single company.

For about three weeks, from August 8 through September 2, 2025, this same August 5 batch was served in the publicly accessible Stockfisher product (top 10 companies free, the rest behind paid tiers) before being replaced by a newer batch of forecasts.

Read more about our methodology for forecasting stock returns: "Can AI forecast stocks through fundamental analysis?", "Calculating intrinsic value from scratch", and "A superforecasting approach to stock fundamentals".

Industry-neutral, dollar-neutral paper portfolio: long the most undervalued name and short the bottom half within each GICS industry (51 long bets, 231 short bets). Both baskets are plotted as underlying returns; Net = Long − Short. Commissions, borrow costs, and dividends are not included. Not investment advice.
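A minimal sketch of the long/short construction described above, assuming each stock already carries a forecast-implied upside score. The tuple layout, equal weighting of names within each basket and of industries across the portfolio, and skipping single-name industries are all hypothetical choices, since the page does not specify weights. Because each industry puts equal dollars on its long and short legs, the portfolio is dollar-neutral and industry-neutral, and Net is simply Long minus Short.

```python
from collections import defaultdict
from statistics import mean


def industry_neutral_returns(stocks):
    """stocks: list of (ticker, industry, upside, period_return) tuples.

    Within each industry with at least two names, go long the single name with
    the highest upside and short the bottom half by upside. Returns
    (long_return, short_return, net_return) as underlying basket returns.
    """
    by_industry = defaultdict(list)
    for _ticker, industry, up, ret in stocks:
        by_industry[industry].append((up, ret))
    long_legs, short_legs = [], []
    for names in by_industry.values():
        if len(names) < 2:
            continue  # cannot be both long and short in a one-name industry
        ranked = sorted(names, key=lambda x: x[0], reverse=True)
        long_legs.append(ranked[0][1])  # most undervalued name
        bottom_half = ranked[len(ranked) - len(ranked) // 2:]  # never includes ranked[0]
        short_legs.append(mean(r for _, r in bottom_half))
    long_ret = mean(long_legs)
    short_ret = mean(short_legs)
    return long_ret, short_ret, long_ret - short_ret


# Hypothetical data: (ticker, industry, forecast upside, realized return).
stocks = [
    ("A", "Tech", 0.5, 0.10), ("B", "Tech", 0.1, 0.02),
    ("C", "Tech", -0.2, -0.04), ("D", "Tech", -0.3, 0.00),
    ("E", "Energy", 0.3, 0.05), ("F", "Energy", -0.1, 0.01),
    ("G", "Utilities", 0.2, 0.03),  # single-name industry, skipped
]
long_ret, short_ret, net_ret = industry_neutral_returns(stocks)
print(long_ret, short_ret, net_ret)
```

In this toy portfolio the long basket returns 7.5%, the short basket's underlying names return −0.5%, and the net return is 8%.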

Nvidia (NVDA)

Semiconductors & Semiconductor Equipment

Forecasts as of August 6, 2025

RetroSearch

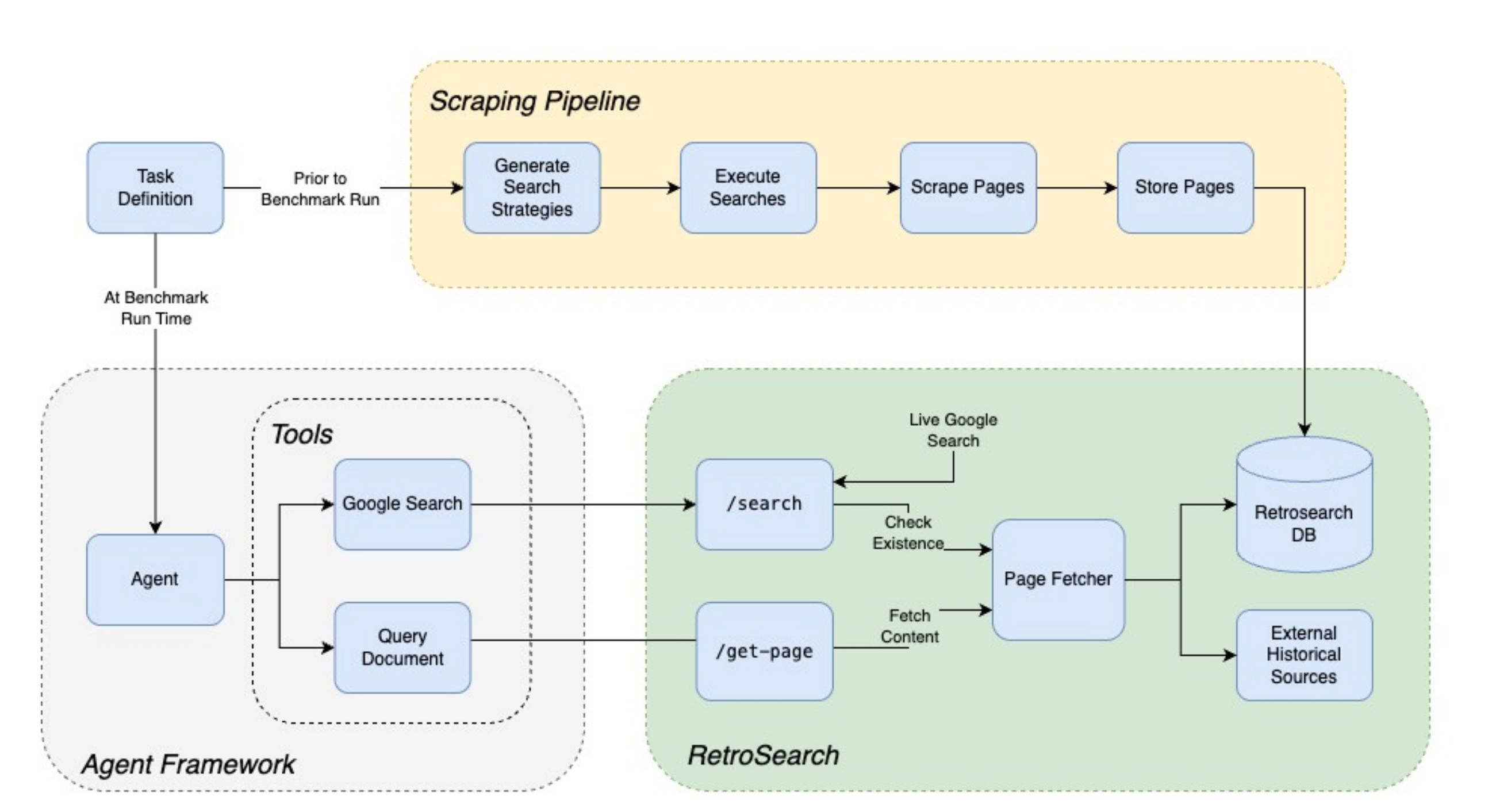

DRB and BTF-2 use RetroSearch, a system that serves agents a frozen, previously scraped version of the internet instead of live pages. This keeps runs reproducible even as the internet changes and lets forecasting tasks be run as "pastcasting".

RetroSearch aims to emulate Google search (specifically, the Serper search API) as closely as possible, to minimize differences between live and "retro" agent runs. Each RetroSearch query goes through the following steps:

- Run a live Serper search for the query

- Look up pages obtained from live search in the RetroSearch database and other archive sources

- If the page is not found in the RetroSearch database or other archive sources, remove it from the results

- Write new snippets from a sample of the frozen page content using a lightweight LLM

- Return the results in the original Serper (Google) response format
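The query steps above can be sketched as a filter over a live result list. This is a hypothetical illustration, not RetroSearch's implementation: `retro_search`, `FrozenPage`, the in-memory `archive` dict, and the injected `live_search` and `write_snippet` callables are all assumptions. The result dictionaries only mimic the `organic` list shape of a Serper response. Dropping non-organic blocks (which come from the live web) and renumbering positions after filtering are also assumed design choices. A real system would call the Serper API and use an LLM for snippets; both are stubbed here so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class FrozenPage:
    url: str
    title: str
    text: str


def retro_search(
    query: str,
    live_search: Callable[[str], dict],
    archive: Dict[str, FrozenPage],
    write_snippet: Callable[[FrozenPage], str],
) -> dict:
    """Keep only live results that have a frozen copy, rebuilt from that copy."""
    live = live_search(query)  # step 1: live Serper-style search
    organic = []
    for result in live.get("organic", []):
        page = archive.get(result["link"])  # step 2: look up the frozen copy
        if page is None:
            continue  # step 3: drop results with no frozen copy
        organic.append({
            "title": page.title,
            "link": result["link"],
            "snippet": write_snippet(page),  # step 4: snippet from frozen text only
            "position": len(organic) + 1,
        })
    # Step 5: same response shape; other live-only blocks are deliberately dropped.
    return {"searchParameters": live.get("searchParameters", {"q": query}),
            "organic": organic}


# Offline stubs standing in for the Serper API and the snippet-writing LLM.
def fake_live_search(q: str) -> dict:
    return {"searchParameters": {"q": q}, "organic": [
        {"title": "A", "link": "https://a.example", "snippet": "live", "position": 1},
        {"title": "B", "link": "https://b.example", "snippet": "live", "position": 2},
    ]}


archive = {"https://b.example": FrozenPage("https://b.example", "B (frozen)",
                                           "Frozen text about B.")}
res = retro_search("example query", fake_live_search, archive, lambda p: p.text[:40])
print(res)
```

Only the second live result survives, and its title and snippet come from the frozen copy rather than the live page, so the agent never sees content newer than the snapshot.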

This gives agents a search experience consistent with real search, but backed exclusively by pages for which we have a frozen candidate. The following diagram from the paper illustrates the process: